AI in health care promises a utopian future, but a physician argues that algorithms and avatars can never replicate the empathy, privacy, and organic intelligence of a real doctor.

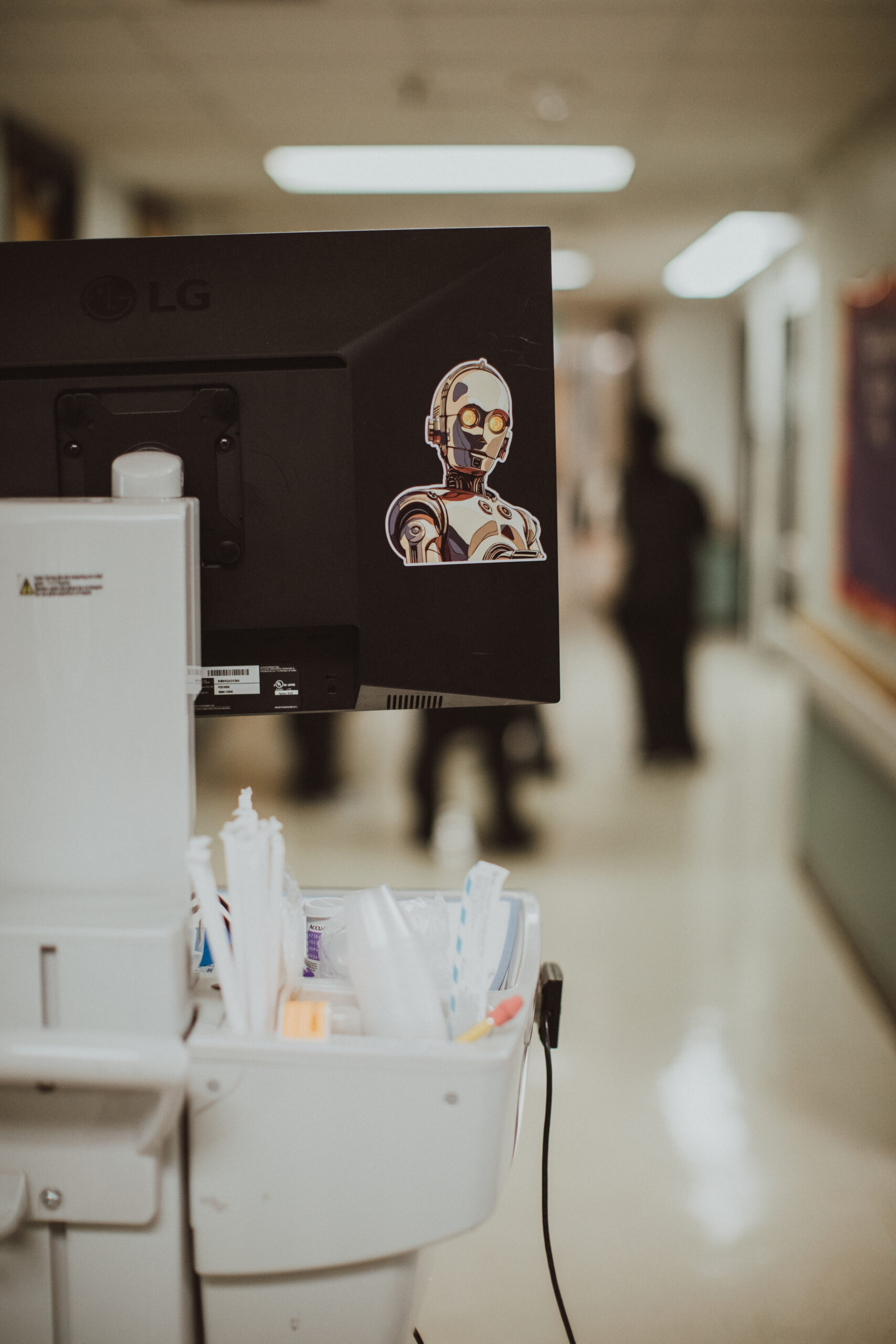

The article opens by addressing the belief, widely promoted by industry leaders, that artificial intelligence (AI) is on the verge of transforming and improving health care. The author, a practicing physician, is deeply skeptical of this optimistic outlook. He invokes C-3PO from Star Wars, a droid known for its personality and its 'desire' to do good, and asks whether AI in health care can truly embody such benevolent qualities. He challenges the narrative that computer algorithms, which consume immense amounts of energy and water, will genuinely improve the inherently human aspects of medical practice, or even render them obsolete, and he doubts that a future in which everyone has a personal AI 'C-3PO' for health care is either desirable or feasible.

The author firmly asserts that the core of health care lies in human emotion, which springs from what he calls 'organic intelligence' (OI). OI, he explains, runs on biological fuel such as neurons and ATP and is shaped by human experience, including thoughts, feelings, and memories (and, he jokes, caffeine in his own case). He argues that our ancestors' capacity to form human-to-human relationships, driven by this organic intelligence, was crucial to surviving on a 'cold, cruel planet' for millennia. The article notes that this 'organic dyad', a suffering person turning to a practitioner who listens thoughtfully, has endured throughout history, through scientific breakthroughs such as the discovery of microbes, anesthesia, antibiotics, and radiation therapy. Society, the author insists, should not be 'bamboozled by computer companies' claiming that their machines can perform this human function better. He emphasizes that the relationship fosters healing, community, and the relief of suffering; it is imperfect, but irreplaceable.

Drawing on his own medical career, the author describes how patients have confided in him about profound pain, regrets, and mistakes, as well as personal matters ranging from their blood pressure to their mental health struggles. He argues that his counsel, which depends on processing both verbal and nonverbal communication and on understanding the 'arc of their lives' within each patient's own value system, cannot be replicated by artificial intelligence.

The author is deeply concerned about the growing integration of AI into health care, particularly the privacy and security of sensitive medical data. He worries that people are, perhaps unknowingly, entering highly private information (medical worries, medications, menstrual cycles, lab results, biopsies, mental health issues, and family histories of cancer) into systems ultimately owned by profit-driven corporations. He names major tech companies, including Amazon, Google, Facebook, Microsoft, Apple, Starlink, and OpenAI, and points out that their primary mission is not public health, education, combating disinformation, or supporting mental well-being. He asks pointedly whether anyone genuinely believes that ChatGPT, reportedly used by more than 230 million users weekly for health questions, is capable of 'caring' for them. He also highlights a critical loophole: because ChatGPT is not a 'health care provider,' it is not bound by the federal health privacy protections of HIPAA.

He then cites Mehmet Oz, administrator of the Centers for Medicare & Medicaid Services, who has suggested that AI avatars will be 'essential' for rural communities and has even proposed using robots to perform ultrasounds on pregnant women. The author sees this as a troubling collision of new technology with historical inequities, particularly in regions like West Virginia, where he practices. He disputes that platforms like Facebook have improved health after decades of use, and he questions the wisdom of entrusting personal medical data to companies known for delivering consumer goods, let alone to 'upstart AI entities in far-flung parts of the globe', which he likens to the ominous 'Death Star' for their potential to exploit data.

The article explains that large language models (LLMs), the technology behind AI chatbots such as ChatGPT, work by performing a sophisticated 'parlor trick': predicting the most probable next word in a sequence based on patterns in massive datasets. The author argues that this is not genuine 'writing': an LLM treats every word as a statistical entity, without grasping context, nuance, or emotional weight. This limitation, he contends, prevents AI from truly understanding or anticipating the unpredictable nature of human communication, especially when people are narrating deeply personal and often traumatic experiences. He offers examples such as talking about a dying mother, postpartum depression, drug use, or sexual assault, situations in which the speakers themselves may not know which words will come next as they try to 'make meaning of their experience' in the presence of a compassionate human listener. He describes this complex, unpredictable, and often 'messy' process as the essence of humanity, the direct opposite of algorithmic predictability.

The author sharply criticizes the idea of relying on AI apps like Grok (which has gained notoriety for controversial outputs) for health care, especially given how strained public trust in human medical professionals already became during crises like COVID-19. He contrasts this with his own approach as a physician: his responses are not pre-formed but emerge from an empathetic heart and an 'internist brain' (aided by coffee), devoted to finding genuinely caring and kind words. He concludes that this human warmth and emotional capacity, which computers and droids (even the most advanced C-3POs) can never truly possess, is indispensable for human survival and healing.
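To make the next-word 'parlor trick' the article describes a little more concrete, here is a deliberately toy sketch in Python. It is a simplification under stated assumptions, not how production LLMs work: real models use large neural networks that assign probabilities to subword tokens given the whole preceding text, whereas this sketch only counts which word follows which (a bigram model). The function names `train_bigrams` and `predict_next` and the tiny corpus are hypothetical, chosen for illustration.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigrams(text: str) -> Dict[str, Counter]:
    """Count, for each word, which words follow it in the training text.

    Toy bigram model (an assumption for illustration); real LLMs are
    neural networks over subword tokens, not word-pair counts.
    """
    words = text.lower().split()
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus; real training data is vastly larger.
corpus = "the patient has a fever . the patient has a cough . the doctor listens ."
model = train_bigrams(corpus)

print(predict_next(model, "patient"))  # has
print(predict_next(model, "the"))      # patient
```

The counts carry no notion of who is speaking or why, only how often words appeared together in the training text, which is a crude version of the purely statistical view of language the author contrasts with a listening physician.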