Bartz v. Anthropic, fair use, and the coming collision between AI and proprietary clinical guidelines

The article discusses the growing tension between copyright law and AI training, particularly how it might affect proprietary clinical guidelines such as MCG or InterQual. It highlights Bartz v. Anthropic, in which a federal district court held that training AI on copyrighted books was 'spectacularly transformative' fair use, provided the copies had been lawfully acquired rather than pirated.

MCG (Milliman Care Guidelines) and InterQual are presented as ubiquitous, proprietary clinical decision-support systems for determining 'medical necessity.' These systems reportedly dominate the market (MCG's share is put at 80%), are not available to the public, carry substantial licensing fees, and are criticized as closed and opaque.

The article describes how these proprietary guidelines have contributed to a 'rulification' trend over the past two decades. This process has shifted medical necessity determinations from broad, individualized standards to a rigid, algorithmic, checkbox-driven exercise that patients and providers find difficult to scrutinize or challenge.

While Bartz broadened the scope of fair use for AI training, it does not automatically give AI companies the right to repurpose MCG and InterQual guidelines without a license. Because the guidelines can be obtained only through paid licenses, the ruling's distinction between lawfully acquired and pirated material matters here, and the license terms themselves may restrict how the content is used. The author asks what provisions on AI training and reuse such licensing agreements would contain.

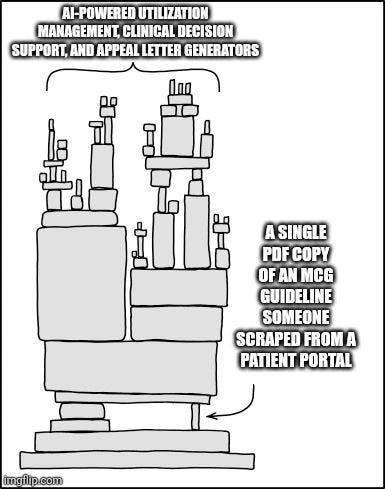

Regulatory transparency requirements are leading to piecemeal disclosure of proprietary criteria through prior authorization denials, appeals, and transparency portals. This creates a 'slow drip' risk: the protected criteria could be reconstructed piece by piece over time. The author also acknowledges that some startups may simply disregard these legal constraints and build tools in this space anyway.

The author asks whether these proprietary systems dominate because of genuinely superior clinical insight or because of an 'artificial scarcity' of information. The article concludes by suggesting that AI models trained on public medical literature and open guidelines could challenge the entrenched position of these proprietary systems.